Before we compare HBM and DDR, we first need to understand DRAM, as both of these are simply advanced extensions of that core technology.

What is DRAM?

DRAM (Dynamic Random Access Memory) is a type of volatile memory that stores each bit of data in a microscopic memory cell. Because it is volatile, it loses all stored data once power is disconnected. Unlike Static RAM (SRAM), which uses flip-flops to hold data and requires no refresh cycles, DRAM relies on a capacitor-based design. Each capacitor stores a bit as an electrical charge that naturally leaks away within milliseconds, so the memory controller must refresh the cells periodically to prevent data loss.
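To make the leak-and-refresh cycle concrete, here is a minimal Python sketch of a single DRAM cell. It is a toy model, not a description of any real chip: the exponential decay, the 100 ms time constant, and the 0.5 read threshold are illustrative assumptions, and the 64 ms refresh interval is a commonly quoted figure used only as an example. The function names are hypothetical.

```python
import math

def cell_voltage(elapsed_ms, v0=1.0, tau_ms=100.0):
    """Charge left on a leaking capacitor after elapsed_ms (toy exponential decay)."""
    return v0 * math.exp(-elapsed_ms / tau_ms)

def survives(read_after_ms, refresh_every_ms=None, threshold=0.5):
    """Return True if a stored '1' is still readable at read_after_ms.

    A refresh is modelled as restoring the full charge, so only the time
    since the most recent refresh matters.
    """
    if refresh_every_ms is None:
        elapsed = read_after_ms                      # never refreshed
    else:
        elapsed = read_after_ms % refresh_every_ms   # time since last refresh
    return cell_voltage(elapsed) > threshold

print(survives(500))                       # False: the charge has leaked away
print(survives(500, refresh_every_ms=64))  # True: the refresh keeps the bit alive
```

With these assumed numbers the unrefreshed cell drops below the threshold after roughly 69 ms, which is why the refresh has to arrive sooner than that.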

SRAM and DRAM are volatile, while all types of disk storage (like HDDs) are non-volatile. Right?

Answer: Yes, that is exactly right!

- Volatile memory chips are incredibly fast, but they are primarily used as a temporary working space.

- Non-volatile memory devices retain data even when the power is disconnected, making them ideal for persistent storage. While they are not as fast as volatile memory, they are built specifically for long-term data retention. Examples of non-volatile storage include SSDs, flash drives, HDDs, and ROM.

Is SRAM faster than DRAM? If yes, why do we even manufacture DRAM?

While SRAM outpaces DRAM in speed, a typical SRAM cell uses six transistors, whereas a DRAM cell needs only a single transistor and a capacitor. The larger cell makes SRAM far more expensive per bit and impractical for high-capacity, multi-gigabyte memory.
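A quick back-of-the-envelope calculation shows how fast the per-cell difference adds up. The sketch below counts only the storage cells for a hypothetical 16 GiB memory and ignores everything else on a real chip (decoders, sense amplifiers, error correction), so treat the totals as rough illustrations. The 16 GiB size and the function names are assumptions chosen for the example.

```python
GIB = 2**30  # bytes in one gibibyte

def cells_needed(capacity_gib):
    """Number of one-bit cells needed to store capacity_gib gibibytes."""
    return capacity_gib * GIB * 8

def transistor_count(capacity_gib, transistors_per_cell):
    """Transistors used by the storage cells alone."""
    return cells_needed(capacity_gib) * transistors_per_cell

sram = transistor_count(16, 6)  # six-transistor SRAM cell
dram = transistor_count(16, 1)  # one transistor (plus one capacitor) per DRAM cell

print(f"16 GiB as SRAM: {sram:,} transistors")
print(f"16 GiB as DRAM: {dram:,} transistors, plus one capacitor per cell")
```

Six times the transistors per bit means far more silicon area for the same capacity, which is where the cost difference comes from.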

Real-world implementations of SRAM and DRAM?

SRAM is primarily used for CPU caches, specifically the L1, L2, and L3 caches. In contrast, DRAM is used across a wider range of high-capacity applications, including main system memory (RAM), GPU VRAM, and HBM.

Some trivia before we discuss HBM vs DDR in more detail.

Did you know how crucial rare earth minerals are to DRAM production?

Even though the finished memory chips don’t actually contain these materials, manufacturing them at scale depends on rare earths. Producing modern memory chips involves building up many microscopic layers, and each layer has to be polished almost perfectly flat, a step known as chemical mechanical planarization (CMP). Specifically, a rare earth compound called ceria (cerium oxide) is widely used as the polishing abrasive in this process.

Image Source - Wikipedia

While usually appearing as dense, coarse powders in shades of brown or black, refined rare-earth oxides can also exhibit lighter colors, as demonstrated here.

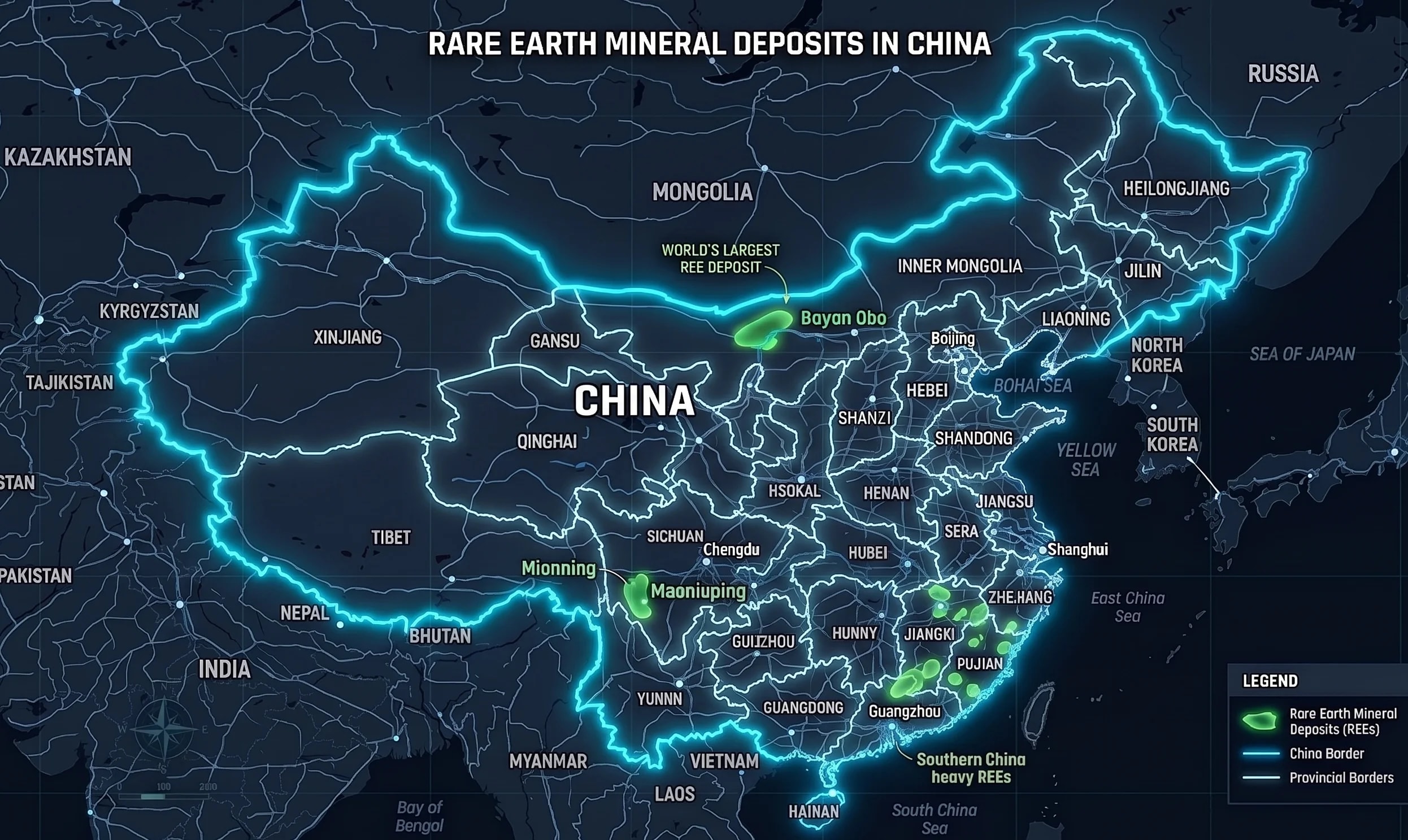

China dominates the rare earth industry. It holds the largest reserves by a wide margin: approximately 44 million metric tons, which is almost half of all known global reserves. China also overwhelmingly dominates the processing and refining of these minerals.

Okay, let’s get back to our original subject: HBM vs. DDR.

We now know these are both types of DRAM. So, what is the actual difference between them?

Architecture: HBM (High Bandwidth Memory) uses a 3D-stacked architecture to overcome the bandwidth limitations of traditional DDR (Double Data Rate) memory, which relies on a standard flat, 2D chip design.

Bus Width: A standard DDR memory channel is 64 bits wide, whereas a single HBM stack uses a massive 1024-bit interface. The HBM4 standard doubles this to 2048 bits per stack.
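Bus width translates into bandwidth through simple arithmetic: theoretical peak bandwidth is the number of bits moved per transfer, times the number of transfers per second, divided by 8 to get bytes. The sketch below applies that formula to both bus widths. The 6.4 GT/s per-pin rate is an assumption, used for both so that only the width differs. Real parts run at various speeds, and sustained bandwidth is lower than the peak. The function name is hypothetical.

```python
def peak_bandwidth_gb_s(bus_width_bits, gigatransfers_per_s):
    """Theoretical peak bandwidth in GB/s: bits per transfer x GT/s / 8."""
    return bus_width_bits * gigatransfers_per_s / 8

PIN_RATE_GT_S = 6.4  # assumed per-pin transfer rate, identical for both cases

ddr_channel = peak_bandwidth_gb_s(64, PIN_RATE_GT_S)
hbm_stack = peak_bandwidth_gb_s(1024, PIN_RATE_GT_S)

print(f"64-bit DDR channel: {ddr_channel:.1f} GB/s")
print(f"1024-bit HBM stack: {hbm_stack:.1f} GB/s")
print(f"Ratio: {hbm_stack / ddr_channel:.0f}x")
```

At the same per-pin rate, the 1024-bit interface delivers 16 times the peak bandwidth of a 64-bit channel, purely because it is 16 times wider.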

Purpose and Cost: DDR is built to provide large capacity at a reasonable cost. HBM is engineered specifically to feed massive volumes of data to processors at very high bandwidth, and its stacked design makes it significantly more expensive to manufacture.

Physical Distance: DDR plugs into motherboard DIMM slots located a few inches away from the processor. HBM, however, is integrated into the same physical package as the CPU or GPU, drastically shortening the signal path, which is what makes such a wide bus practical.

HBM Applications - GPUs / High-performance CPUs

HBM, thanks to the advantages mentioned above, is used in GPUs and high-performance CPUs. DDR is poorly suited to such bandwidth-intensive workloads because each channel is limited to a 64-bit bus. Trying to run a large AI model over these 64-bit channels is like trying to evacuate a 100,000-seat stadium through a single small door. Painful!
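The door analogy can be put into numbers by counting how many bus transfers it takes to move a fixed amount of data. The sketch below uses an arbitrary 1 GiB payload as an assumption and a hypothetical helper name. It looks only at bus width and ignores clock speed and protocol overhead.

```python
def transfers_needed(payload_bytes, bus_width_bits):
    """Bus transfers required to move payload_bytes over a bus of the given width."""
    bytes_per_transfer = bus_width_bits // 8
    return -(-payload_bytes // bytes_per_transfer)  # ceiling division

PAYLOAD = 2**30  # 1 GiB, an arbitrary example size

narrow = transfers_needed(PAYLOAD, 64)    # the small door
wide = transfers_needed(PAYLOAD, 1024)    # the wide gate

print(f"64-bit bus:   {narrow:,} transfers")
print(f"1024-bit bus: {wide:,} transfers")
```

Each transfer on the wide bus carries 128 bytes instead of 8, so the same payload needs one sixteenth as many trips.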

HBM solves this problem: with their super-wide 1024-bit interfaces, multiple stacks together can transfer terabytes of data per second. And since the stacks sit in the same package as the CPU or GPU, the energy spent moving each bit comes down considerably. This is one of the biggest advantages in a power-hungry AI data center ecosystem.
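As a rough illustration of what reaching terabytes per second involves, the sketch below estimates how many memory interfaces are needed to hit a target bandwidth. All the numbers are assumptions: a 3 TB/s target, and peak figures of 819.2 GB/s for a 1024-bit stack and 51.2 GB/s for a 64-bit channel, both derived from an assumed 6.4 Gb/s per pin. The helper name is hypothetical.

```python
import math

def interfaces_needed(target_gb_s, per_interface_gb_s):
    """Smallest number of identical interfaces whose combined peak meets the target."""
    return math.ceil(target_gb_s / per_interface_gb_s)

TARGET_GB_S = 3000.0      # assumed target: 3 TB/s
HBM_STACK_GB_S = 819.2    # assumed peak for one 1024-bit stack
DDR_CHANNEL_GB_S = 51.2   # assumed peak for one 64-bit channel

print("HBM stacks needed:  ", interfaces_needed(TARGET_GB_S, HBM_STACK_GB_S))
print("DDR channels needed:", interfaces_needed(TARGET_GB_S, DDR_CHANNEL_GB_S))
```

Under these assumptions, four stacks placed next to the processor do the work of dozens of motherboard channels.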

This concludes today’s post. Thank you for reading.